Runway, the well-funded New York City-based generative AI video startup, updated its signature text/image/video-to-video model Gen-2 today, and many of its users are collectively freaking out over how good it is. Numerous AI filmmakers have called it “game changing,” and some have called it a “pivotal moment in generative AI.”

Specifically, Gen-2 has undergone “major improvements to both the fidelity and consistency of video results,” according to Runway’s official account on the social network X (formerly Twitter).

“It’s a significant step forward,” posted Jamie Umpherson, Runway’s head of creative, on X. “For fidelity. For consistency. For anyone, anywhere with a story to tell.”

How the new Gen-2 update works

Originally unveiled in March 2023, Gen-2 improved on Runway’s Gen-1 model, which required users to upload an existing video clip. Gen-2 instead let users type text prompts to generate new four-second-long videos from scratch through its proprietary AI foundation model, or upload images to which Gen-2 could add motion.

In August, the company added an option to extend AI-generated videos in Gen-2 with new motion, up to a total length of 18 seconds.

In September, Runway further updated Gen-2 with new features it collectively called “Director Mode,” allowing users to choose the direction and intensity/speed of the “camera” movement in their Runway AI-generated videos.

Of course, there is no actual camera filming these videos — instead these movements are simulated to represent what it would be like to hold a real camera and film a scene, but the content is all created by Runway’s Gen-2 model on the fly. For example, users can zoom in or out quickly on an object, or pan left or right around a subject in their video, or even add motion selectively to a person’s face or a vehicle, all in the web application or iOS app.

Today, the company’s new update adds even smoother, sharper, higher-definition and more realistic motion to subjects that are either completely AI-generated or taken from still images. According to one AI artist, @TomLikesRobots on X, the resolution of Gen-2 videos generated from still images has been upgraded from 1792×1024 to 2816×1536.
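As a rough sanity check on what that jump means, the short Python sketch below compares the two reported resolutions. It assumes the figures quoted by @TomLikesRobots are exact; the helper name `pixel_count` is purely illustrative and is not part of any Runway software.

```python
from fractions import Fraction


def pixel_count(width: int, height: int) -> int:
    """Total number of pixels in a single frame."""
    return width * height


# Resolutions as reported on X (assumed exact for this comparison).
old = (1792, 1024)
new = (2816, 1536)

old_pixels = pixel_count(*old)
new_pixels = pixel_count(*new)

# Exact ratio of pixels per frame, new versus old.
ratio = Fraction(new_pixels, old_pixels)

# Aspect ratios reduced to lowest terms.
old_aspect = Fraction(*old)
new_aspect = Fraction(*new)

print(f"old: {old_pixels:,} pixels, aspect {old_aspect}")
print(f"new: {new_pixels:,} pixels, aspect {new_aspect}")
print(f"pixels per frame: {float(ratio):.2f}x")
```

On those figures, each frame carries roughly 2.36 times as many pixels as before, and the aspect ratio shifts slightly, from 7:4 to 11:6.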

By uploading AI-generated still imagery created by another source, say Midjourney, AI creatives and filmmakers are able to generate entire AI productions, albeit short ones, from scratch. And by stitching together clips of up to 18 seconds each, AI filmmakers have already created some compelling longer works, including a music video screening in cinemas.

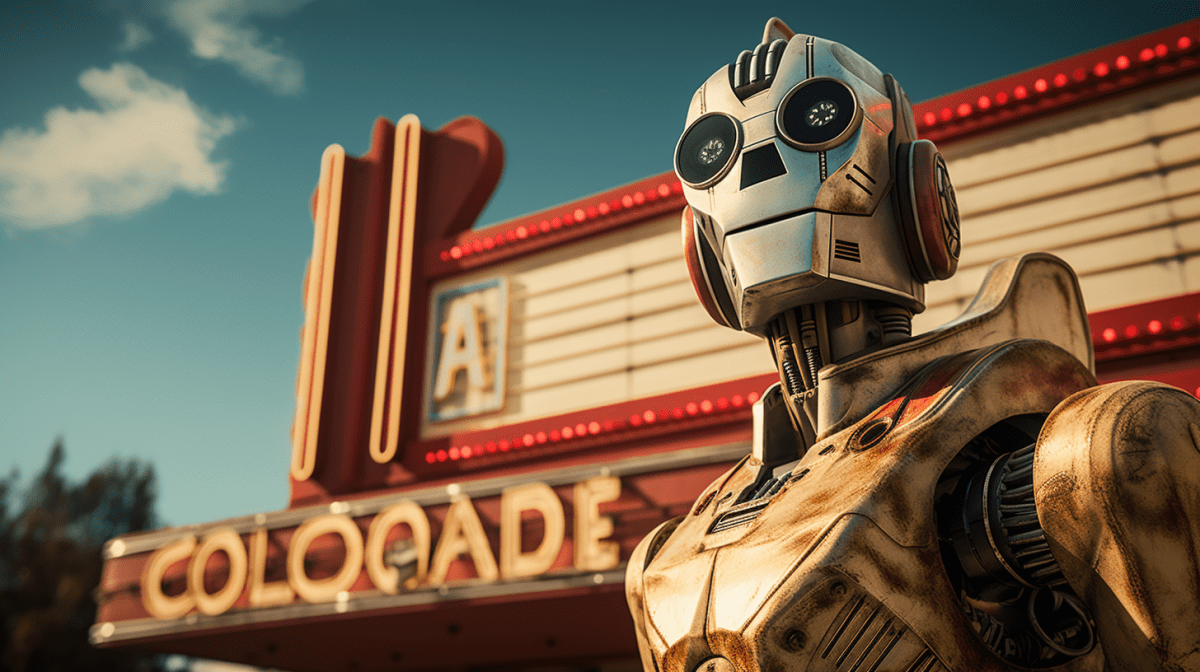

‘Creative software is dead’

Runway’s founder and CEO Cristóbal Valenzuela, known for being a charismatic and thoughtful evangelist of AI and an early follower of the technology since the days of Google’s DeepDream models in 2015, is understandably bullish on his company’s new update.

Taking to X, he wrote that “Technology is a tool that allows us to tell stories and create worlds beyond our imagination.”

He later posted a thread of messages on X beginning with the proclamation “Creative software is dead.”

While that is undeniably a bold proclamation, Valenzuela added nuance in his follow-up messages, explaining that previous software required human users to create manually by “pushing pixels” with tools.

By contrast, AI-powered apps and models like Runway’s Gen-2 now do that manual work for us, and the user simply directs the machine at a higher level with natural language or by adjusting parameters. The tools themselves do more of the work, as they are capable of understanding and manipulating the underlying media in a way previous software was not.

Clearly, Valenzuela and many of Runway’s employees and users have been inspired by the Gen-2 update. Just how far the technology goes remains to be seen, but early indications are that AI filmmaking is emerging as a major creative force for this century, perhaps not unlike the way physical filmmaking took off in the 1920s and became mass entertainment.

The update arrives while the major Hollywood actors union remains on strike and in tense negotiations with studios over AI being used to create digital twins of actors or potentially replace them entirely, something Gen-2 can already do, at least for short, silent films. That timing is itself an incredible irony.
