Blackshark.ai has already made a digital twin of the Earth, and its next play further democratizes the hitherto lofty (if you will) world of geospatial intelligence. Continuing the nautical theme, Orca Huntr is an AI-powered tool for finding and tracking anything from orbit, and it’s so simple that a child, or even a member of Congress, could use it.

The company also announced $15 million in new funding that should help kick-start this new line of business.

The startup was born out of the gaming industry, bringing a fresh perspective to the matter of interpreting and using orbital and aerial imagery. In 2020 we wrote up the detailed story of how they created the digital twin of Earth, but the short version is that they built a central system for interpreting imagery that varied widely over time and origin.

“From day one, we had to design technology flexible enough to digest all this data,” CEO Michael Putz told me in an interview about the new feature and funding. And now they’re working on a way for people to get more value out of this digital Earth without needing a PhD in machine learning or even a few years of coding experience.

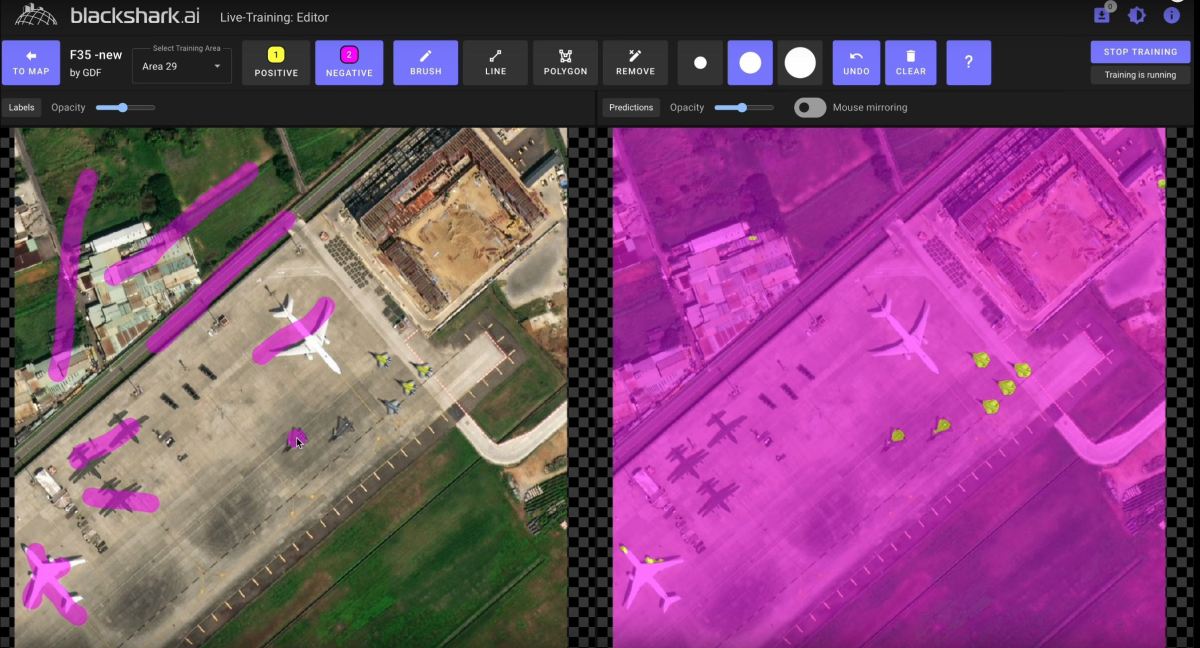

Obviously a “no-code geospatial AI data play” is something of a buzzword bingo card, but once you see the product in action it’s easy to see how it could make waves. The complexity of the product is hidden behind an almost ludicrously simple interface: you scribble on the part of the image you want to find more of, and the system finds more of that thing, pretty much instantly. That’s… it.

Really! Look:

Labeling objects with a few brush strokes refines the model in near real time. After doing this you could have the model label all the F-35s in the state. Image Credits: Blackshark.ai

Well, there’s a little more to it than that. You can also scribble in a different color in the negative space to say “not this part,” and there are a few knobs to twiddle for advanced features. But the entire point of Orca Huntr was to allow anyone who can see a feature and scribble on it to, essentially, build a machine learning engine to detect every other instance of it — every instance in the imagery you upload, or on the planet if you’re feeling expansive.
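To make the scribble idea concrete, here is a deliberately tiny sketch in Python. It is not Blackshark.ai’s method, which the company has not detailed; it swaps in a nearest-centroid color classifier, and every name in it (`train_from_scribbles`, `detect`, the toy `image`) is hypothetical. Positive scribbles say “this,” negative scribbles say “not this,” and every other pixel gets whichever label it most resembles.

```python
# Toy illustration only: a nearest-centroid pixel classifier, NOT Orca Huntr's model.
# All names and the tiny "image" below are hypothetical.

def centroid(pixels):
    """Average color of a list of (r, g, b) pixels."""
    return tuple(sum(channel) / len(pixels) for channel in zip(*pixels))

def sq_dist(a, b):
    """Squared Euclidean distance between two colors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def train_from_scribbles(image, positive, negative):
    """Build a two-class model from scribbled (row, col) coordinates."""
    pos = centroid([image[r][c] for r, c in positive])
    neg = centroid([image[r][c] for r, c in negative])
    return pos, neg

def detect(image, model):
    """Mark every pixel that looks more like the positive scribble."""
    pos, neg = model
    return [[sq_dist(px, pos) < sq_dist(px, neg) for px in row] for row in image]

ROOF = (0.6, 0.3, 0.2)   # reddish roof tile
PANEL = (0.1, 0.1, 0.5)  # dark blue solar panel
image = [
    [ROOF, PANEL, PANEL, ROOF],
    [ROOF, ROOF, PANEL, ROOF],
    [PANEL, ROOF, ROOF, ROOF],
]

# One positive scribble on a panel, one negative scribble on bare roof.
model = train_from_scribbles(image, positive=[(0, 1)], negative=[(0, 0)])
mask = detect(image, model)
panel_count = sum(sum(row) for row in mask)
```

With a single stroke of each color, `mask` flags all four panel pixels in the toy image, including the ones that were never scribbled on. A real system would use far richer features than raw color, but the workflow of a few labeled pixels in and a full detection mask out is the same shape.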

Say you wanted to identify all the buildings with solar panels on their roofs. Scribble on them. Trying to outline burned areas in wildfire zones? Scribble on them. Counting fishing boats in an area of the ocean? Scribble. And of course, want to identify locations of adversary assets? Scribble on them in a secure area.

Ordinarily this type of object detection requires a bit more time, effort, and expertise. The labeling process for large-scale imagery is not particularly modern or efficient, and when done at scale it is often outsourced, feeding a multibillion-dollar industry. Asking a firm to go through a hundred images and painstakingly label every river, then training a computer vision model on those and getting it all back to you, can take weeks or more, plus a fair amount of money. And if you’re doing sensitive work like military intelligence, you have to do it all internally, which means having a team capable of doing so, and most don’t.

During a demo of the technology, Putz explained how their approach differs, beyond the UI, from those annotation and object recognition services offered by competitors.

“We’re not trying to fully automate it; we’re using an intelligent human in the loop, which other solutions don’t want. But the AI is never 100% accurate, so you refine it… but everyone else goes the traditional way of doing labeling,” he said, which is to say sending it off to the professionals (and by extension, whoever they pass it off to). “Orca makes it no code and simple, more accurate and secure — any user that can hold a mouse can detect anything.”

If the jet-detection algorithm you get is only 80% accurate, you used to have to send it back to the maker with another hundred annotated images and wait for them to get back to you. With Orca Huntr, it might literally be as simple as adding one more brush stroke — the model updates in real time and you can see whether it’s better. It’s an evolution of the very tools they used (and declined to give details on at the time) during the creation of the Earth twin for Microsoft’s flight simulator.
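The refinement loop described above can be sketched the same way. This is an assumption-laden toy, not the company’s implementation: a hypothetical `ScribbleModel` keeps running totals per label, so one extra brush stroke updates it immediately, and a hypothetical `accuracy` helper shows the before and after on a small hand-labeled set of brightness values.

```python
# Toy illustration only: incremental refinement of a nearest-centroid model.
# ScribbleModel, accuracy, and all values are hypothetical, not Blackshark.ai's code.

class ScribbleModel:
    def __init__(self):
        # Running sum and count of brightness values per label (True = "jet").
        self.total = {True: 0.0, False: 0.0}
        self.count = {True: 0, False: 0}

    def add_stroke(self, label, brightness_values):
        """Fold one brush stroke into the model without retraining from scratch."""
        for value in brightness_values:
            self.total[label] += value
            self.count[label] += 1

    def predict(self, value):
        """Return True if the value is closer to the positive mean than the negative."""
        pos = self.total[True] / self.count[True]
        neg = self.total[False] / self.count[False]
        return abs(value - pos) < abs(value - neg)

def accuracy(model, labelled):
    """Fraction of (value, label) pairs the model gets right."""
    hits = sum(model.predict(value) == label for value, label in labelled)
    return hits / len(labelled)

# Hand-labeled check set: bright jets, one jet in shadow, dark tarmac.
check = [(0.9, True), (0.85, True), (0.5, True), (0.2, False), (0.25, False)]

model = ScribbleModel()
model.add_stroke(True, [0.9])    # first stroke on a sunlit jet
model.add_stroke(False, [0.2])   # "not this" stroke on tarmac
before = accuracy(model, check)  # the shadowed jet is missed

model.add_stroke(True, [0.5, 0.5, 0.5])  # one more stroke, on the shadowed jet
after = accuracy(model, check)
```

Here the first two strokes get four of the five check pixels right, and the third stroke brings the shadowed jet into the positive class, so `after` is higher than `before`. The point is the feedback loop, not the classifier: the person sees the miss, adds a stroke, and checks again in seconds rather than waiting on a vendor.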

“You actually start to learn how AI works, and what training and annotation means, and what you have to do to get good results,” Putz said. “It’s actually fun to train with it — it’s like a challenge, to see how many brush strokes you need.”

It vastly simplifies and accelerates the common task of “find all the X in this set of images,” which is of as much interest to realtors and developers as it is to governments, scientists, and military types. You might think that providing this capability would allow anyone to do the necessary work themselves, but Putz said there’s actually been an increase in clients asking for soup-to-nuts solutions.

“We tried to say we only provide this thin horizontal layer, but we found that customers always eventually want us to verticalize,” he said. For example, a wind farm developer could use the tool to find areas with the right features for placing a dozen turbines. But they want more than that and may simply provide the imagery and constraints to Blackshark.ai, asking not only that the company find likely spots, but also that it create line-of-sight visualizations and account for other real-world considerations.

Example of a 3D visualization of turbines at a given location.

“We can put a wind park anywhere on the planet in 3D, then put it into Unreal [Engine] and show the mayor of a town how from this terrace or town square it wouldn’t be visible,” Putz said.

Governments around the world are asking for the same thing: despite the simplicity of the new interface, many just don’t have the familiarity with this type of work that’s needed yet. Investments in AI and data over the last decade have had mixed results, with many tools and platforms not panning out or proving too expensive or limiting.

“For myself, a key learning was how important governments are as clients – and it’s not just three-letter agencies, it’s the forest and coastal agencies — I mean, they’re the ones taking care of the planet,” he said.

It helps that the company is the only one with access to investor Maxar’s archive of satellite data, which covers numerous eras and satellite types, making it invaluable not just for training models but for augmenting and contextualizing other datasets. Putz cited the resilience of its systems and the ability to handle lots of different data types without breaking down as a core strength of the company.

Image Credits: Blackshark.ai

That versatility may even allow it to generalize outside orbital imagery. A model for creating models, which is what Orca Huntr amounts to, might very well work for things other than pictures of the planet. It might be able to learn and report objects or features in microscopic industrial or medical imagery.

“I think it could work in radiology, doctors marking tumors and so on. I just reached out to a friendly investor to introduce me to OEMs to test it out there. And we got a very interesting request from a hard drive manufacturer,” he noted. (When I asked about whale spotting, he said it was possible given the right imagery, and “We had a gentleman already asking for penguin colonies in Antarctica,” which are famously visible from space.)

The new features were partly made possible by $15 million in new funding from (quoting from the press release): “Existing investors Point72 Ventures, M12 Microsoft’s Venture Fund, and Maxar are joined by In-Q-Tel (IQT), Safran, ISAI Cap Venture, Capgemini’s VC Fund managed by ISAI, Einstein Industries Ventures, Interwoven Ventures (formerly ROBO Global Ventures), OurCrowd, Gaingels and OpAmp Capital.” That brings their total raised to $35 million.

It’s always worth noting when a major tech company, a major space company, and a major… however you might describe In-Q-Tel, are all on the same investment. Clearly there’s a confluence of needs and opportunity here.

You’ll be able to test the tool out yourself come December 4, if you’re a paying customer. The company declined to provide details on how it will be priced.